GFW Technical Review 12 – Hysteria

Every circumvention tool we have examined so far – from Shadowsocks to REALITY – runs over TCP. This is not a coincidence. TCP has been the dominant transport protocol on the internet for decades, and the entire TLS ecosystem is built on top of it. When the circumvention community adopted the strategy of “look like normal HTTPS,” that meant looking like TLS-over-TCP, because that is what HTTPS was.

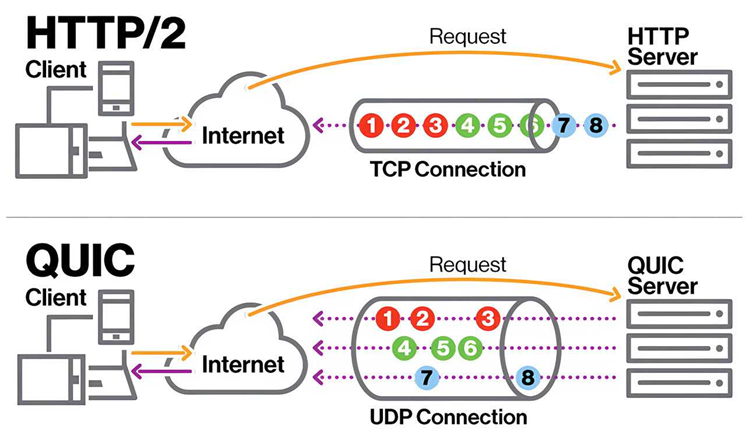

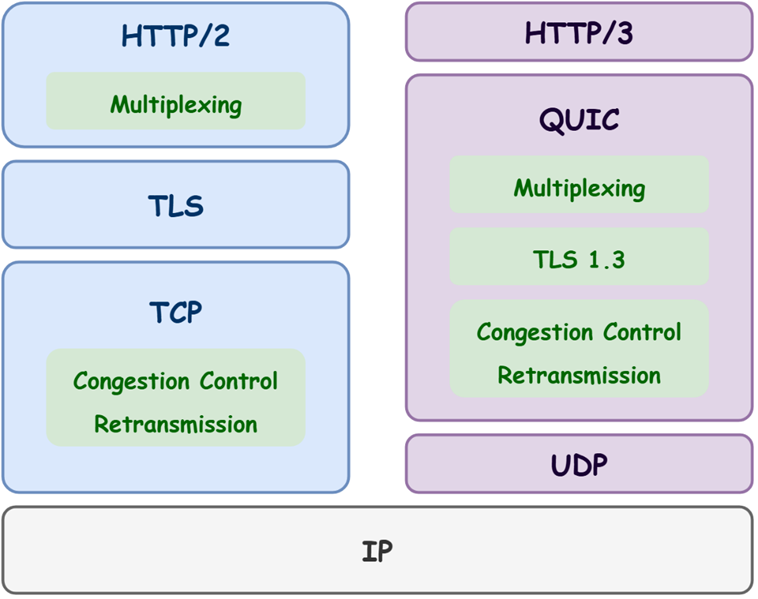

But HTTPS is changing. HTTP/3, the latest version of the protocol, abandons TCP entirely in favour of QUIC – a UDP-based transport developed by Google and standardised by the IETF in 2021. QUIC integrates TLS 1.3 directly into its handshake, supports multiplexed streams without head-of-line blocking, and reduces connection setup from TCP+TLS’s two or three round trips down to a single round trip – or even zero round trips for resumed connections. Major websites and services have adopted it rapidly: Google, YouTube, Facebook, Instagram, and Cloudflare all serve substantial portions of their traffic over QUIC. By some estimates, QUIC now carries over a third of global web traffic.

This shift matters for circumvention in two ways. First, it opens a new design space. A proxy protocol built on QUIC inherits properties – low-latency handshakes, built-in encryption, multiplexing, connection migration, custom congestion control – that TCP-based tools had to build from scratch or bolt on awkwardly. Second, it changes what “normal” traffic looks like. As QUIC becomes a larger share of everyday internet activity, a UDP-based proxy protocol is no longer the anomaly it once was. The cover story has changed.

Hysteria is the most prominent protocol to exploit this opportunity.

QUIC

Before examining Hysteria, it is worth understanding what QUIC provides, since Hysteria’s design decisions are best understood as extensions of – and deliberate departures from – QUIC’s architecture.

QUIC is a general-purpose transport protocol that runs over UDP. It was designed to solve several longstanding problems with the TCP+TLS stack:

- Head-of-line blocking. In TCP, a single lost packet stalls the entire byte stream until it is retransmitted. HTTP/2 tried to multiplex multiple streams over one TCP connection, but a loss in any stream blocked all of them. QUIC multiplexes streams independently at the transport layer – a lost packet in one stream does not affect the others.

- Handshake latency. A TCP connection requires a three-way handshake before data can flow. TLS adds another one or two round trips on top. QUIC combines the transport and cryptographic handshakes into a single round trip. For resumed connections, QUIC supports 0-RTT – the client can send application data in its very first packet.

- Connection migration. TCP connections are identified by a four-tuple (source IP, source port, destination IP, destination port). If the client’s IP changes – switching from Wi-Fi to mobile data, for instance – the TCP connection breaks. QUIC uses connection IDs instead, allowing a session to survive network changes seamlessly.
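A toy Python model makes the head-of-line blocking point concrete. It is our own illustration and is not taken from any QUIC implementation. It shows what a receiver can hand to the application after a single lost packet. Packets are hypothetical `(stream_id, seq)` pairs. `per_stream=False` models TCP's single ordered byte stream, and `per_stream=True` models QUIC's independent streams.

```python
def deliverable(packets, lost, per_stream):
    """Return, per stream, the sequence numbers the application can read right now.

    packets: list of (stream_id, seq) in sending order, seq counted per stream.
    lost: set of indices into `packets` that were dropped and not yet retransmitted.
    per_stream: True models QUIC (ordering per stream), False models TCP (one ordered stream).
    """
    ready = {sid: [] for sid, _ in packets}
    if not per_stream:
        # TCP: one byte stream, so everything after the first gap is held back.
        for i, (sid, seq) in enumerate(packets):
            if i in lost:
                break
            ready[sid].append(seq)
        return ready
    # QUIC: each stream stops only at its own first gap; other streams keep flowing.
    blocked = set()
    for i, (sid, seq) in enumerate(packets):
        if i in lost:
            blocked.add(sid)
        elif sid not in blocked:
            ready[sid].append(seq)
    return ready


packets = [("A", 0), ("B", 0), ("A", 1), ("B", 1), ("A", 2), ("B", 2)]
print(deliverable(packets, lost={1}, per_stream=False))  # TCP
print(deliverable(packets, lost={1}, per_stream=True))   # QUIC
```

With the first packet of stream B lost, the TCP model delivers only A's first packet. The QUIC model delivers all of stream A and holds back only stream B.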

QUIC Censorship

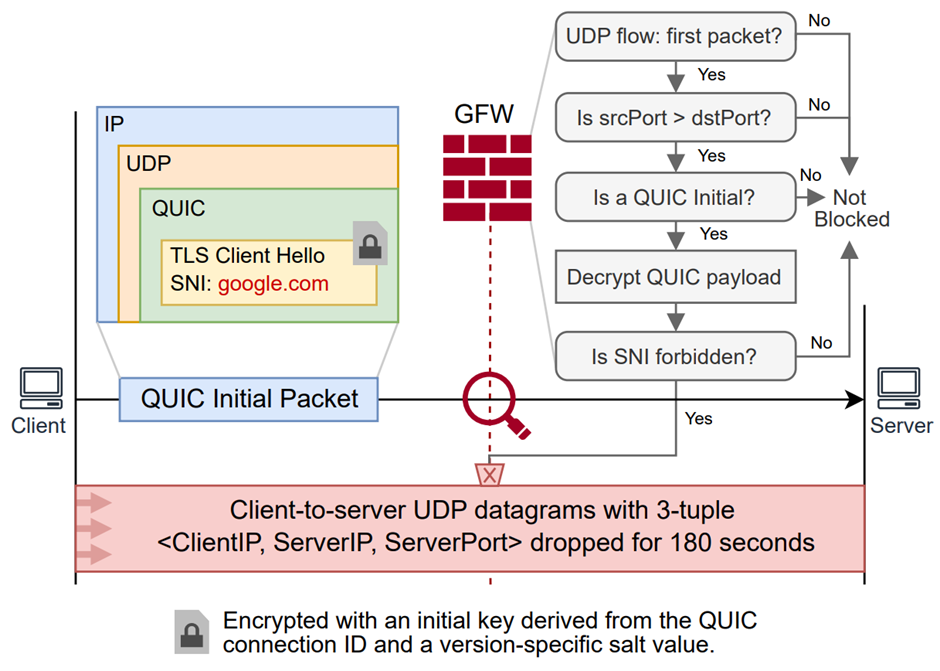

QUIC presents another major challenge to the GFW in the ongoing evolution of internet security. QUIC uses TLS 1.3, so the SNI field still exists in the ClientHello, allowing the GFW to continue performing SNI-based censorship and fingerprint analysis. However, several properties of QUIC make censorship more difficult than it is for TCP.

QUIC is not TCP. The GFW was fundamentally designed to handle TCP connections. Its DPI analysis relies on TCP stream reconstruction, and its primary blocking mechanism is TCP RST injection. With UDP, the GFW no longer needs to reconstruct streams, which simplifies analysis and reduces the attack surface. However, UDP has no equivalent of a TCP RST packet that the GFW could inject to terminate a connection.

The GFW therefore combines in-path and on-path blocking strategies for QUIC censorship. Ideally, it would drop an offending QUIC packet in-path as soon as it detects it. This is extremely difficult at scale, however, because it requires deploying mass censorship infrastructure directly in the data path, where every inspection delay adds latency to the traffic passing through. To compensate, the GFW often censors QUIC on-path as well. Rather than dropping the first offending packet, it lets that packet through and relies heavily on residual censorship: after deciding to block, it keeps blocking the connection tuple for an extended period, usually several minutes. The first datagram gets through, but all subsequent communication is dropped.
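Residual censorship can be modelled as a small state machine keyed by flow. The class below is a hypothetical illustration, not a description of the GFW's internals. The `(client IP, server IP, server port)` key and the 180-second default are assumptions chosen to match "several minutes".

```python
class ResidualBlocker:
    """Hypothetical model of residual blocking: once a flow is flagged, drop it for a while."""

    def __init__(self, block_seconds: float = 180.0):
        self.block_seconds = block_seconds
        self.blocked_until = {}  # flow key -> time at which the block expires

    def forward(self, flow, now: float, offending: bool) -> bool:
        """Return True if the datagram is forwarded, False if it is dropped."""
        expiry = self.blocked_until.get(flow)
        if expiry is not None and now < expiry:
            return False  # residual block still active for this flow
        if offending:
            # On-path style: the triggering datagram itself still gets through,
            # but every later datagram on this flow is dropped until expiry.
            self.blocked_until[flow] = now + self.block_seconds
        return True


blocker = ResidualBlocker()
flow = ("198.51.100.7", "203.0.113.5", 443)   # illustrative addresses
print(blocker.forward(flow, 0.0, offending=True))    # True: first Initial passes
print(blocker.forward(flow, 1.0, offending=False))   # False: later packets dropped
```

The design explains the censor's trade-off. The decision can happen off the critical path, and the cost is that one datagram always leaks through.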

QUIC datagrams are encrypted from the very beginning. Even the first packet of the handshake, the Client Initial, is encrypted. This encryption offers no real secrecy, however. Client and server have not yet exchanged any keys at that point, so the Initial keys must be derivable from information both sides already have. Any observer can therefore decrypt a Client Initial by reading the Destination Connection ID (DCID) in plaintext from the packet header and combining it with a public, fixed salt. Still, this forces the GFW to decrypt every Client Initial before it can analyse it, which requires significantly more computation than inspecting plaintext TCP+TLS handshakes. Research has shown that flooding the border with QUIC Initial packets can severely degrade the GFW's QUIC censorship infrastructure, causing it to miss, and fail to block, a large volume of traffic.
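The following Python sketch shows the derivation an observer repeats for every Client Initial. It follows QUIC version 1 as specified in RFC 9001 (Section 5.2), using HKDF from RFC 5869 with SHA-256 and the TLS 1.3 `HKDF-Expand-Label` construction. It stops at key derivation. Actually decrypting the payload would additionally require removing header protection and running AES-128-GCM, which the Python standard library does not provide. The function names are our own.

```python
import hashlib
import hmac

# Public, fixed salt for QUIC version 1 Initial packets (RFC 9001, Section 5.2).
INITIAL_SALT_V1 = bytes.fromhex("38762cf7f55934b34d179ae6a4c80cadccbb7f0a")


def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    """HKDF-Extract (RFC 5869) with SHA-256."""
    return hmac.new(salt, ikm, hashlib.sha256).digest()


def hkdf_expand(prk: bytes, info: bytes, length: int) -> bytes:
    """HKDF-Expand (RFC 5869) with SHA-256."""
    output, block, counter = b"", b"", 1
    while len(output) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        output += block
        counter += 1
    return output[:length]


def hkdf_expand_label(secret: bytes, label: str, length: int) -> bytes:
    """TLS 1.3 HKDF-Expand-Label with an empty context, as QUIC uses it."""
    full_label = b"tls13 " + label.encode()
    info = length.to_bytes(2, "big") + bytes([len(full_label)]) + full_label + b"\x00"
    return hkdf_expand(secret, info, length)


def client_initial_keys(dcid: bytes) -> dict:
    """Derive the client's Initial protection keys from the plaintext DCID alone."""
    initial_secret = hkdf_extract(INITIAL_SALT_V1, dcid)
    client_secret = hkdf_expand_label(initial_secret, "client in", 32)
    return {
        "key": hkdf_expand_label(client_secret, "quic key", 16),  # AES-128-GCM key
        "iv": hkdf_expand_label(client_secret, "quic iv", 12),    # AEAD nonce base
        "hp": hkdf_expand_label(client_secret, "quic hp", 16),    # header-protection key
    }


keys = client_initial_keys(bytes.fromhex("8394c8f03e515708"))
print({name: value.hex() for name, value in keys.items()})
```

The DCID used here is the sample from RFC 9001 Appendix A, whose published test vectors can be used to check the output. Nothing secret enters the computation, but the censor must run it for every Client Initial it inspects.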

The Congested Gateway

China's internet was architected with very few international gateways, which carry the entire nation's cross-border traffic. This suited the GFW, which could be built around these choke points. But as internet usage grew, the gateways could not keep up with the rising load, and the quality of service for international traffic suffered badly. Packet drops introduced by the GFW itself compound the problem. For many years, international connections have been poor, with constant packet loss at the gateways, especially during peak hours. The state-owned carriers have shown little incentive to fix this.

These packet losses significantly degrade the user experience. TCP's congestion control interprets packet loss as a sign of network congestion and throttles bandwidth accordingly. With constant packet drops at the international gateways, it keeps cutting throughput. UDP fares no better. Although it has no congestion control to throttle it, its quality of service is even worse, because the international gateways tend to prioritise TCP packets over UDP datagrams. As a result, UDP is more likely to be dropped during congested periods.

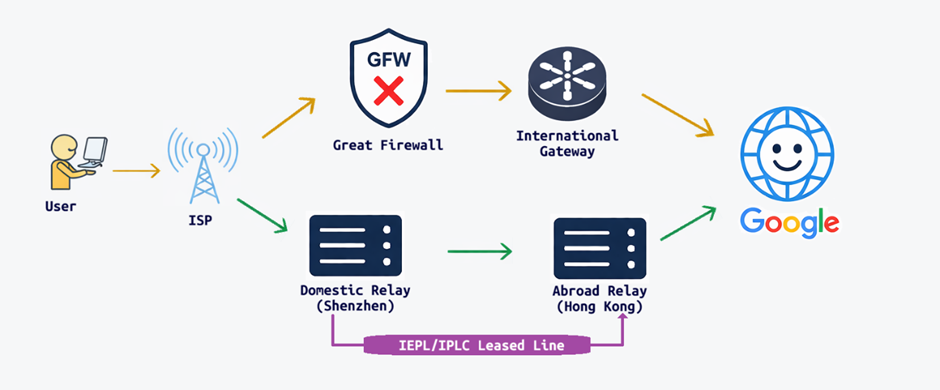

Businesses resort to IEPL or IPLC for better cross-border connectivity. IEPL (International Ethernet Private Line) and IPLC (International Private Leased Circuit) are privately leased lines with guaranteed speed and service-level objectives. They use dedicated international gateways, completely bypassing both the congested public gateways and the GFW. They are, however, expensive and require government licensing, so typically only sizable businesses use them.

Due to their speed, reliability, and complete bypass of the GFW, IEPL lines are a popular choice for circumvention service providers. The provider sets up a proxy in an IEPL-enabled data centre within the border; users connect to that proxy; traffic crosses the border over IEPL to an endpoint abroad with reliable speed and no GFW interference; and from the foreign endpoint, traffic is relayed to its true destination. However, this setup is not risk-free. The authorities are aware of this practice and actively scrutinise users of international private leased lines.

Hysteria

Hysteria’s primary goal is to achieve substantial speed gains over the public internet without relying on private leased lines. Its key insight is that the bandwidth-limiting factor for China’s cross-border internet traffic is often not the network path itself, but TCP’s own congestion control mechanism.

The Problem with TCP Congestion Control

TCP’s congestion control is designed to find the optimal bandwidth dynamically. It starts slow, then gradually increases bandwidth usage until it encounters packet loss, which it treats as a signal of congestion and responds to by multiplicatively reducing throughput. The underlying assumption is that packet loss occurs because the end user is oversubscribing the available bandwidth, and that reducing the sending rate will relieve the congestion.
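The behaviour described above can be sketched as a per-round-trip trace of a Reno-style congestion window, measured in segments. This is a textbook simplification in the spirit of RFC 5681. Slow start doubles the window each round trip up to `ssthresh`, congestion avoidance adds one segment per round trip, and a loss halves the window. The function and its parameters are our own. Real stacks update per ACK and differ in detail; Linux, for example, defaults to CUBIC.

```python
def aimd_trace(rounds: int, loss_rounds: set, ssthresh: float = 64.0, cwnd: float = 1.0) -> list:
    """Congestion window (in segments) at the start of each round trip, Reno-style."""
    trace = []
    for r in range(rounds):
        trace.append(cwnd)
        if r in loss_rounds:
            # Multiplicative decrease: loss is read as congestion, so halve the window.
            # (A retransmission timeout would reset it to 1; not modelled here.)
            ssthresh = max(cwnd / 2, 2.0)
            cwnd = ssthresh
        elif cwnd < ssthresh:
            # Slow start: exponential growth, doubling once per round trip.
            cwnd = min(cwnd * 2, ssthresh)
        else:
            # Congestion avoidance: additive increase of one segment per round trip.
            cwnd += 1
    return trace


print(aimd_trace(8, loss_rounds={5}, ssthresh=8))
```

If losses recur every few round trips, as they do at a permanently lossy gateway, the window is stuck in a low sawtooth however much capacity the path actually has.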

This is the wrong assumption for China’s international internet. Packet loss occurs at the international gateways and the GFW, which are perpetually congested and always introducing drops. For a single user, reducing bandwidth does virtually nothing to alleviate that congestion – the bandwidth consumed by one user is a tiny fraction of the total capacity the gateway must handle, and some of the losses are introduced by the GFW regardless. TCP’s congestion control simply throttles the end user’s bandwidth without providing any meaningful benefit.
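The well-known approximation by Mathis et al. for the steady-state throughput of Reno-style TCP under random loss quantifies this. It is a substitute model that the article itself does not use: throughput ≈ (MSS / RTT) × √(3/2) / √p. The parameter values below are illustrative, not measurements of the border.

```python
import math


def mathis_throughput_bps(mss_bytes: int, rtt_s: float, loss_rate: float) -> float:
    """Approximate Reno throughput in bits/s: (MSS / RTT) * sqrt(3/2) / sqrt(p)."""
    if not 0.0 < loss_rate < 1.0:
        raise ValueError("loss_rate must be in (0, 1)")
    return (mss_bytes * 8 / rtt_s) * math.sqrt(1.5) / math.sqrt(loss_rate)


# 1460-byte segments, 200 ms round trip, 1% and 4% random loss (illustrative values).
for p in (0.01, 0.04):
    print(p, round(mathis_throughput_bps(1460, 0.2, p) / 1e6, 2), "Mbit/s")
```

With 1% loss the formula gives roughly 0.7 Mbit/s, and quadrupling the loss rate halves that figure. The link's capacity does not appear anywhere in the formula. That is the article's point: a single user backing off cannot fix loss they did not cause.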

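This has been a known problem for a long time, but there was no easy fix. TCP congestion control is implemented inside the operating system's network stack, not in applications. An application can at most choose among the algorithms its kernel already ships with, and writing a new one means modifying the kernel. QUIC changed this. QUIC is typically implemented in user space as a library inside the application. It not only implements its own congestion control but, crucially, allows applications to customise it. This is the primary reason Hysteria chose QUIC: to implement its own congestion control mechanism, named Brutal.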

Brutal Congestion Control

Brutal flips the congestion control model that TCP adopts. It expects the user to specify a fixed target bandwidth and simply transmits at that rate. When packet loss occurs, it does not back off – instead, it increases the sending rate to offset the impact of the lost packets. For example, if 20% of packets are being lost, it sends at 1.25× the target rate to maintain the effective bandwidth. In that sense, Brutal is not congestion control at all – it is congestion ignorance.
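The compensation rule from the 20% example comes down to a single division. The sketch below is our own reading of the description above, not Hysteria's implementation. `max_loss` is a hypothetical safety cap we added so that the rate stays finite as the measured loss approaches 100%.

```python
def brutal_send_rate(target_bps: float, loss_rate: float, max_loss: float = 0.5) -> float:
    """Sending rate that keeps the delivered rate at target_bps despite random loss.

    Unlike AIMD, the rate never goes down when loss goes up; it only goes up.
    max_loss is a hypothetical cap, not a documented Hysteria parameter.
    """
    if not 0.0 <= loss_rate < 1.0:
        raise ValueError("loss_rate must be in [0, 1)")
    effective_loss = min(loss_rate, max_loss)
    # Delivered = sent * (1 - loss), so sent = target / (1 - loss).
    return target_bps / (1.0 - effective_loss)


print(brutal_send_rate(100e6, 0.2) / 1e6, "Mbit/s")  # 20% loss -> 1.25x the target
```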

Brutal is an extreme design and inherently unfair, completely disregarding the congestion signals that allow TCP flows to share a bottleneck cooperatively. It works well when packet losses on the network are purely random or structural (as at China’s international gateways), but it is harmful when there is genuine congestion, because it simply makes the congestion worse. It is also dangerous if adopted widely – if every flow ignores congestion signals and bombards the network, the result could be congestion collapse. Brutal is intended to be used only when the user knows the exact available bandwidth and accepts the trade-offs.

BBR Congestion Control

Hysteria uses BBR as its default congestion control. BBR – Bottleneck Bandwidth and Round-trip propagation time – is a congestion control algorithm created by Google and widely deployed in their own QUIC infrastructure. Instead of blindly treating packet loss as a signal of congestion, BBR continuously builds a model of the network path by measuring two signals: round-trip time and acknowledgement rate.

When a connection is established, the sending rate starts low and grows exponentially, much like TCP's slow start. TCP stops growing when a packet is lost. BBR instead stops growing when it observes that the acknowledgement (delivery) rate is no longer increasing in step with the sending rate, which indicates that the bottleneck bandwidth is saturated. It then enters a steady state, pacing at its estimate of the bottleneck bandwidth and capping the data in flight at a small multiple of the bandwidth-delay product (bottleneck bandwidth × minimum RTT). RTT serves as its primary congestion signal. An RTT well above the measured minimum means a queue is building on the path, and BBR periodically lowers its sending rate to drain that queue and re-measure.
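The path model behind this can be sketched in Python. It keeps the maximum recent delivery rate as the bottleneck-bandwidth estimate and the minimum recent RTT as the propagation-delay estimate, then derives a pacing rate and a congestion window from them. This is our own simplification, not Hysteria's or Google's code. Windows are counted in samples rather than in round trips and seconds, and the Drain, ProbeBW gain-cycling and ProbeRTT phases are omitted. The Startup exit rule (three round trips without 25% bandwidth growth) follows the published BBR description. Class and method names are hypothetical.

```python
from collections import deque


class BbrModelSketch:
    """Simplified BBR-style path model (illustration only, not a full BBR)."""

    def __init__(self, bw_window: int = 10, rtt_window: int = 100, cwnd_gain: float = 2.0):
        self.bw_samples = deque(maxlen=bw_window)    # delivery-rate samples, bytes/s
        self.rtt_samples = deque(maxlen=rtt_window)  # RTT samples, seconds
        self.cwnd_gain = cwnd_gain
        self.full_bw = 0.0       # bandwidth recorded at the last significant growth
        self.stalled_rounds = 0  # consecutive round trips without 25% growth

    def on_ack(self, delivered_bytes: int, interval_s: float, rtt_s: float) -> None:
        """Record one delivery-rate sample and one RTT sample. Loss is never consulted."""
        self.bw_samples.append(delivered_bytes / interval_s)
        self.rtt_samples.append(rtt_s)

    def btl_bw(self) -> float:
        """Bottleneck bandwidth estimate: windowed maximum delivery rate."""
        return max(self.bw_samples)

    def rt_prop(self) -> float:
        """Propagation delay estimate: windowed minimum RTT."""
        return min(self.rtt_samples)

    def pacing_rate(self, gain: float = 1.0) -> float:
        """Bytes/s to pace at; Startup uses gain > 1, steady state stays near 1."""
        return gain * self.btl_bw()

    def cwnd_bytes(self) -> float:
        """Cap on data in flight: a multiple of the bandwidth-delay product."""
        return self.cwnd_gain * self.btl_bw() * self.rt_prop()

    def pipe_full(self) -> bool:
        """Call once per round trip during Startup; True once bandwidth has plateaued."""
        if self.btl_bw() >= self.full_bw * 1.25:
            self.full_bw = self.btl_bw()
            self.stalled_rounds = 0
            return False
        self.stalled_rounds += 1
        return self.stalled_rounds >= 3


model = BbrModelSketch()
model.on_ack(125_000, 0.1, 0.05)  # 1.25 MB/s delivered, 50 ms RTT
model.on_ack(100_000, 0.1, 0.04)  # 1.0 MB/s delivered, 40 ms RTT
print(model.btl_bw(), model.rt_prop(), model.cwnd_bytes())
```

Packet loss appears nowhere in this model, which is why random drops at the border do not drag the sending rate down the way they do for Reno-style TCP.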

BBR is a much better fit for traffic crossing the Chinese border because it does not treat packet loss as a congestion signal. This is the key reason why Hysteria achieves significantly better network performance than its TCP-based peers.

QUIC Proxy

Aside from its congestion control innovations, Hysteria follows the standard good practices of modern censorship-resistant proxies, closely resembling its TLS-based siblings. The client establishes a QUIC connection with the server – everything within the channel is fully encrypted by QUIC’s built-in TLS 1.3. The client then authenticates with a password by sending an HTTP/3 request. Once authentication succeeds, Hysteria treats the QUIC connection as a raw bidirectional pipe, proxying TCP streams over it. The client opens a new QUIC stream for every TCP connection, and QUIC automatically handles the multiplexing. If authentication fails, Hysteria can be configured with HTTP/3 masquerading, where it behaves exactly like a standard HTTP/3 server to resist active probes.
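Per-stream proxying is easiest to see at the byte level. The sketch encodes QUIC variable-length integers exactly as RFC 9000 (Section 16) defines them. It then uses them to build a header a client could write at the start of each new stream to request a TCP connection. The header layout is our reading of the Hysteria 2 protocol description in the apernet/hysteria repository and should be checked against it: request ID 0x401, a length-prefixed `host:port` address, and length-prefixed random padding. `tcp_request_header` is a hypothetical helper, not part of Hysteria's code.

```python
import os


def encode_varint(value: int) -> bytes:
    """QUIC variable-length integer (RFC 9000, Section 16): 2-bit length prefix."""
    if value < 0 or value >= 1 << 62:
        raise ValueError("varint out of range")
    if value < 1 << 6:
        return value.to_bytes(1, "big")                            # prefix 00
    if value < 1 << 14:
        return (value | 0x4000).to_bytes(2, "big")                 # prefix 01
    if value < 1 << 30:
        return (value | 0x8000_0000).to_bytes(4, "big")            # prefix 10
    return (value | 0xC000_0000_0000_0000).to_bytes(8, "big")      # prefix 11


# Assumed from our reading of the Hysteria 2 protocol document; verify before relying on it.
TCP_REQUEST_ID = 0x401


def tcp_request_header(address: str, padding_len: int = 0) -> bytes:
    """Hypothetical helper: bytes opening a new QUIC stream that asks for a TCP connection."""
    addr = address.encode()
    padding = os.urandom(padding_len)  # random padding hides the true header length
    return (encode_varint(TCP_REQUEST_ID)
            + encode_varint(len(addr)) + addr
            + encode_varint(len(padding)) + padding)


print(tcp_request_header("example.com:443").hex())
```

Because every proxied TCP connection gets its own QUIC stream, a loss on one connection does not stall the others, which is the same head-of-line property described earlier.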

Closing Thoughts

The arms race between the GFW and circumvention tools is not just about protocol-level obfuscation – it is also about performance and user experience. Ultimately, technology serves people, and good technology needs to provide a good experience. Hysteria’s popularity is a strong demonstration of that principle. As the internet continues to evolve and circumvention tools continue to leverage increasingly modern and secure protocols, the race is gradually shifting to a new dimension.

References

- Jana Iyengar and Martin Thomson. QUIC: A UDP-Based Multiplexed and Secure Transport. RFC 9000. https://datatracker.ietf.org/doc/html/rfc9000

- Jana Iyengar and Ian Swett. QUIC Loss Detection and Congestion Control. RFC 9002. https://datatracker.ietf.org/doc/html/rfc9002

- M. Allman, V. Paxson, and E. Blanton. TCP Congestion Control. RFC 5681. https://www.rfc-editor.org/rfc/rfc5681.html

- xeovo. Meet the Developer: Hysteria. https://hub.xeovo.com/posts/127-meet-the-developer-hysteria

- Ali Zohaib, Qiang Zao, Jackson Sippe, Abdulrahman Alaraj, Amir Houmansadr, Zakir Durumeric, and Eric Wustrow. Exposing and Circumventing SNI-based QUIC Censorship of the Great Firewall of China. 34th USENIX Security Symposium (USENIX Security ’25). https://www.usenix.org/conference/usenixsecurity25/presentation/zohaib

- apernet. Hysteria. https://github.com/apernet/hysteria

- Y. Cui, T. Li, C. Liu, X. Wang and M. Kühlewind. Innovating Transport with QUIC: Design Approaches and Research Challenges. IEEE Internet Computing. https://ieeexplore.ieee.org/document/7867726

- Yuri Nikolaenko. The Rising Dominance of QUIC. https://www.bcsatellite.net/blog/the-rising-dominance-of-quic/

- Neal Cardwell, Yuchung Cheng, C. Stephen Gunn, Soheil Hassas Yeganeh, and Van Jacobson. BBR: Congestion-Based Congestion Control. ACM Queue. http://queue.acm.org/detail.cfm?id=3022184

- Neal Cardwell and Yuchung Cheng. TCP BBR congestion control comes to GCP – your Internet just got faster. Google Cloud. https://cloud.google.com/blog/products/networking/tcp-bbr-congestion-control-comes-to-gcp-your-internet-just-got-faster
