GFW Technical Review 01 – Architecture

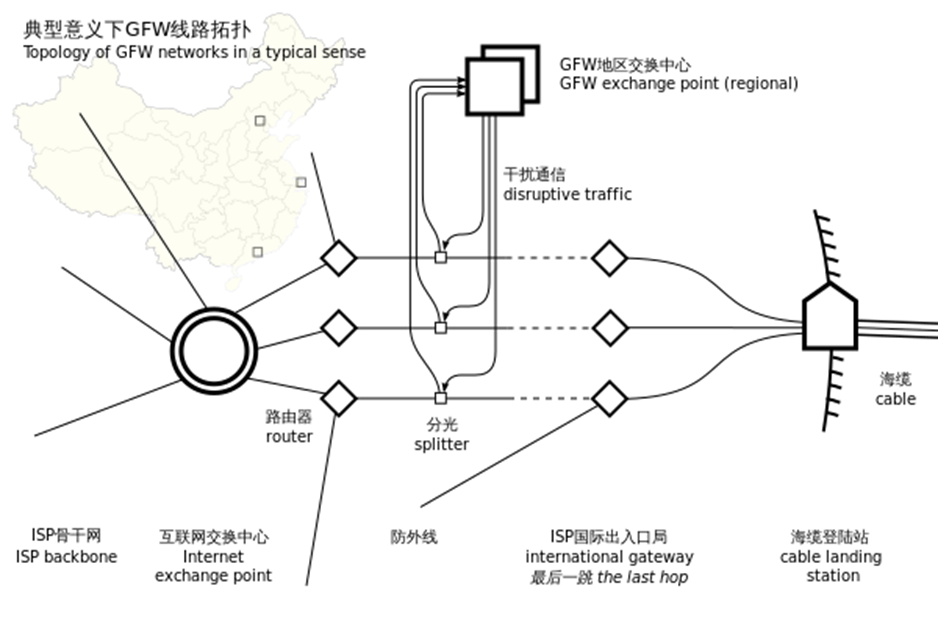

In the late 1990s and early 2000s, China’s Internet infrastructure expanded rapidly. As connectivity grew, policymakers sought mechanisms to manage cross-border information flows in line with evolving legal and regulatory requirements. The physical layout of the early Chinese Internet made this goal technically achievable: most international traffic passed through a handful of centralized exchange points. These “choke points” provided natural locations for introducing filtering technologies without redesigning the entire domestic network.

The earliest versions of the Great Firewall (GFW) were built around these critical international gateways. At these points, border routers could be configured with access-control rules, external filtering appliances could copy and inspect traffic, packets could be injected to interrupt connections, and centralized controllers could coordinate policies across carriers. Backbone operators – large state-affiliated telecommunications companies – maintained this infrastructure in coordination with newly established regulatory entities responsible for information control.

Even at this early stage, the GFW was never a single firewall appliance. It would be technically impossible to enforce broad, heterogeneous policies across all cross-border traffic using a single device or application. From the beginning, the GFW was a large-scale distributed system: a collection of loosely coupled components that cooperated to achieve nationwide filtering goals. Among these components, three mechanisms eventually emerged as foundational pillars of its architecture.

IP Address Blocking

Routers at backbone or gateway points can maintain ACLs and simply drop packets to and from specific IP ranges. This method is reliable – routers almost never misapply an ACL – and computationally cheap. However, the operational burden shifts to the operator, who must maintain a constantly updated list of target IP addresses. This is challenging because the number of websites is enormous, and IP addresses frequently change. IP-based blocking also tends to over-block, particularly for shared hosting providers or CDNs where many domains share the same address.
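
To make the ACL idea concrete, here is a minimal Python sketch of the matching logic, not router configuration. The blocklist entries are reserved documentation ranges chosen for illustration, and the names `BLOCKED_NETWORKS` and `acl_permits` are hypothetical.

```python
import ipaddress

# Hypothetical blocklist. These are reserved documentation ranges (RFC 5737),
# used here only as placeholders.
BLOCKED_NETWORKS = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.7/32"),
]

def acl_permits(src: str, dst: str) -> bool:
    """Return False if either endpoint falls inside a blocked range."""
    src_ip = ipaddress.ip_address(src)
    dst_ip = ipaddress.ip_address(dst)
    return not any(src_ip in net or dst_ip in net for net in BLOCKED_NETWORKS)

# Two unrelated sites on one shared address: blocking the address blocks both.
SHARED_HOSTING = {
    "targeted.example": "192.0.2.10",
    "unrelated.example": "192.0.2.10",
}
```

In this toy model, `acl_permits("203.0.113.5", SHARED_HOSTING["unrelated.example"])` is `False` even though only `targeted.example` was the intended target, which is the over-blocking problem described above.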

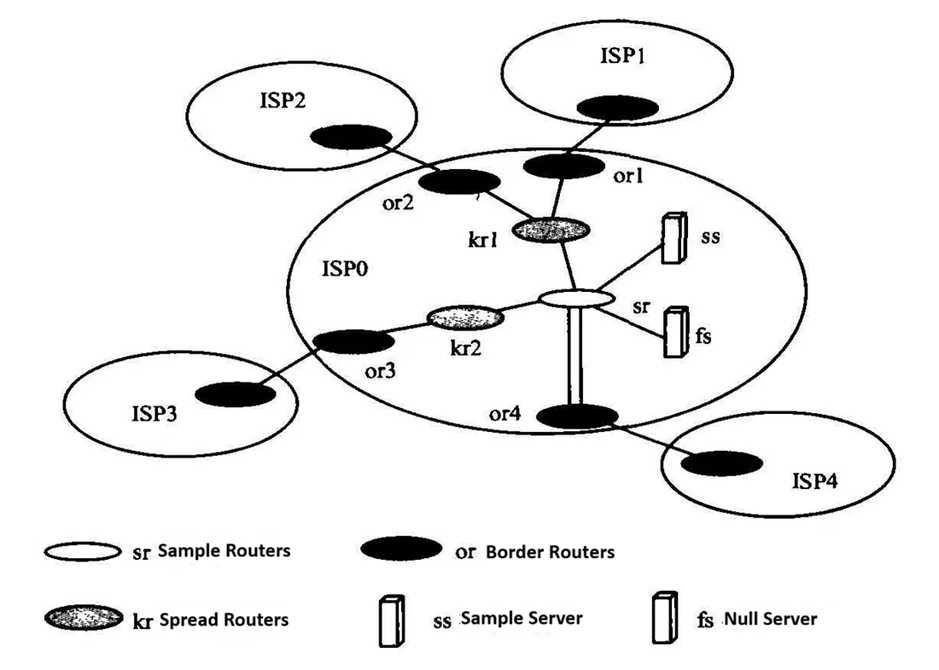

Even though ACL-based blocking is computationally inexpensive, maintaining extremely large ACLs on a small number of backbone routers becomes impractical. To address this, the GFW uses null routing, which distributes the blocking burden across the entire domestic network. Understanding null routing requires a quick look at how routers communicate. Routers share routing information through the Border Gateway Protocol (BGP), which lets routing announcements propagate automatically across the network. Critically, this system is based on trust: routers assume the information they receive from peers is correct.

This trust relationship gives the GFW an opportunity. By injecting a false BGP announcement for an IP address it wants to block – advertising a route that leads nowhere – the GFW causes the entire network to route packets for that destination into a “black hole.” In other words, the incorrect routing information propagates until routers across the network drop traffic to the targeted IP address. This system-wide null route is both efficient and very difficult for typical users to work around.
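
The effect of a null route on forwarding can be sketched with a toy routing table. This is a simplified model that assumes the injected route is a more specific prefix and therefore wins under ordinary longest-prefix matching. The next-hop names (`upstream`, `peer-a`, `null0`) and the `lookup` helper are hypothetical.

```python
import ipaddress

NULL_INTERFACE = "null0"  # hypothetical name for a discard next hop

def lookup(routing_table: dict, dst: str):
    """Longest-prefix match: the most specific covering route wins."""
    dst_ip = ipaddress.ip_address(dst)
    matches = [(net, hop) for net, hop in routing_table.items() if dst_ip in net]
    if not matches:
        return None
    _, next_hop = max(matches, key=lambda m: m[0].prefixlen)
    return next_hop

# A router's view before any injection (documentation prefixes only).
table = {
    ipaddress.ip_network("0.0.0.0/0"): "upstream",
    ipaddress.ip_network("203.0.113.0/24"): "peer-a",
}

# The injected announcement: a host route that points nowhere.
table[ipaddress.ip_network("203.0.113.50/32")] = NULL_INTERFACE
```

After the injection, `lookup(table, "203.0.113.50")` resolves to the discard next hop while neighbouring addresses in the same /24 still reach `peer-a`. Every router that accepts the announcement ends up with the same entry, so no single device has to carry the full blocking load.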

Port Blocking

In addition to filtering based on destination IP addresses, backbone routers can also block traffic targeting specific destination ports. On Cisco equipment, this capability is typically implemented through ACL-Based Forwarding (ABF). Port-level blocking gives operators more granular control, since many IP addresses host multiple services. Blocking an entire IP could unintentionally disrupt unrelated applications, whereas selectively filtering ports allows censorship systems to impair only the targeted service while leaving others reachable.
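
As a rough illustration of the added granularity, the sketch below extends an address match with an optional destination port. It models only the decision logic and makes no claim about how any vendor feature is configured. `Rule`, `RULES`, and `is_blocked` are hypothetical names.

```python
import ipaddress
from typing import NamedTuple, Optional

class Rule(NamedTuple):
    network: ipaddress.IPv4Network
    port: Optional[int]  # None means "any port on this network"

# Hypothetical rule: block only TCP/443 on one documentation address.
RULES = [Rule(ipaddress.ip_network("192.0.2.10/32"), 443)]

def is_blocked(dst: str, dst_port: int) -> bool:
    """Return True if a rule matches both the address and the port."""
    dst_ip = ipaddress.ip_address(dst)
    return any(
        dst_ip in rule.network and rule.port in (None, dst_port)
        for rule in RULES
    )
```

Here `is_blocked("192.0.2.10", 443)` is `True` while `is_blocked("192.0.2.10", 25)` is `False`, so a mail service on the same host would stay reachable.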

DNS Poisoning

IP blocking is operationally expensive, and blocklists go stale quickly because IP addresses change frequently. Domain names, however, tend to remain stable. DNS interference therefore became a more desirable and scalable technique.

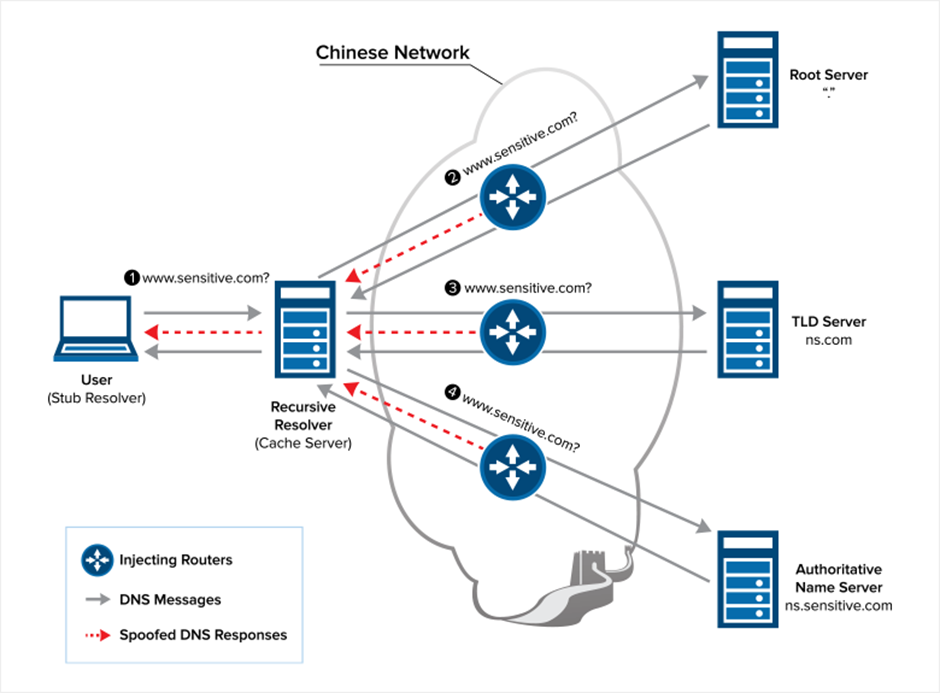

The GFW can poison domestic DNS resolvers by providing forged responses. Because DNS has a hierarchical, cache-driven design, an incorrect resolution at the root or ISP level quickly propagates through caches and eventually contaminates the entire domestic DNS ecosystem.

In principle, users could bypass poisoned resolvers by querying foreign DNS servers. To counter this, the GFW injects forged DNS responses when it detects outbound DNS queries crossing the border. These forged responses are almost always faster than the legitimate ones, because they are injected locally rather than traveling to Japan, the U.S., or elsewhere. As a result, DNS clients accept the forged record and ignore the genuine response that arrives later.
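
The race can be sketched as a tiny simulation. It assumes a simplified client that accepts the first response whose query ID matches and discards anything later. The latencies, addresses, and the `DnsResponse` type are invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class DnsResponse:
    query_id: int
    address: str
    arrival_ms: float  # time after the query was sent
    source: str        # label for this sketch only; a real client cannot see it

def first_matching_answer(query_id: int,
                          responses: List[DnsResponse]) -> Optional[DnsResponse]:
    """Model a client that takes the earliest response with a matching ID."""
    candidates = [r for r in responses if r.query_id == query_id]
    return min(candidates, key=lambda r: r.arrival_ms, default=None)

# Hypothetical timings: the injected answer travels a much shorter path.
responses = [
    DnsResponse(0x1A2B, "203.0.113.80", arrival_ms=120.0, source="genuine"),
    DnsResponse(0x1A2B, "192.0.2.66", arrival_ms=5.0, source="injected"),
]
winner = first_matching_answer(0x1A2B, responses)
```

With these numbers `winner.source` is `"injected"`. The genuine answer still arrives, which is why measurement studies can detect injection by continuing to listen after the first response.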

Keyword-Based Filtering (Early DPI)

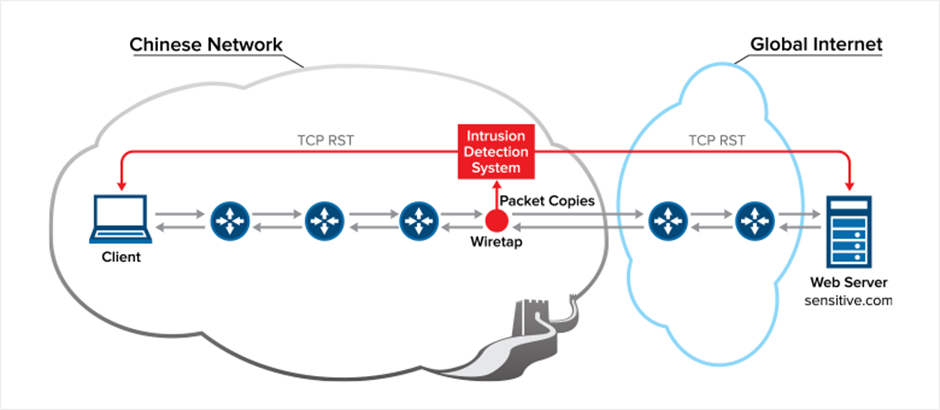

In the early days of the Internet, most traffic was plaintext. This allowed the GFW to implement straightforward pattern matching within TCP streams. To do this, operators tapped the cross-border choke points and captured a copy of all international traffic off-path. The traffic was sharded and distributed to a large server farm performing early forms of Deep Packet Inspection (DPI).

These DPI systems could scan unencrypted HTTP requests and match specific keywords. They could also perform limited stateful inspection, tracking the progress of a TCP stream and identifying behavioral patterns, though this was more computationally expensive. In practice, early DPI deployments were mostly stateless.

When a DPI device decided a connection violated policy, it injected forged TCP RST packets to both endpoints, abruptly and falsely terminating the connection. This behavior became one of the earliest and most recognizable signatures of the GFW in action.
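
The difference between stateless and stateful matching can be shown in a few lines. This is a deliberately simplified model: the keyword is a placeholder, packets are plain byte strings, and the verdict functions are hypothetical rather than a description of any real DPI product.

```python
from typing import List

BLOCKED_KEYWORDS = [b"forbidden-term"]  # placeholder keyword

def payload_matches(payload: bytes) -> bool:
    """Plain substring search over a single buffer."""
    return any(keyword in payload for keyword in BLOCKED_KEYWORDS)

def stateless_verdict(packets: List[bytes]) -> bool:
    """Inspect each packet in isolation, keeping no per-connection state."""
    return any(payload_matches(p) for p in packets)

def stateful_verdict(packets: List[bytes]) -> bool:
    """Reassemble the stream first, then search the joined bytes."""
    return payload_matches(b"".join(packets))

# The same HTTP request, delivered whole and split across two segments.
whole = [b"GET /forbidden-term HTTP/1.1\r\n"]
split = [b"GET /forbid", b"den-term HTTP/1.1\r\n"]
```

Both verdicts flag `whole`, but only `stateful_verdict` flags `split`. That gap is the price of the cheaper stateless approach, and in this model a positive verdict is the point at which the forged RST packets would be sent.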

A Distributed, Multi-Layer Design

A core design philosophy of the GFW is that it should be distributed and multi-layered rather than monolithic. This principle is visible in both null routing and DNS poisoning: instead of concentrating all filtering at the border, responsibility is pushed outward into the network. Over time, this logic extended to DPI as well. Rather than perform all heavy inspection at international gateways – which would create massive bottlenecks – ISPs gradually took on more of this responsibility within the domestic backbone. This support for regional enforcement also enabled more flexible or region-specific policies, such as those observed in Xinjiang in recent years.

This distributed approach provides scalability, fault-tolerance, and policy flexibility – properties that would be impossible with a centralized firewall.

Operational Challenges

Despite the conceptual simplicity of each mechanism, the GFW faces significant operational challenges. The most persistent issue is how to minimize false positives. Conceptually, the GFW performs a binary classification task: determine whether a given connection violates policy. No classifier is perfect. IP lists become outdated, DPI may match the wrong substring, and legitimate flows can resemble disallowed ones.

Like many classifiers, the GFW must balance precision and recall. Historically, it has been tuned toward high precision, prioritizing low false-positive rates even if that allows more false negatives. This balance, however, is adjustable. Operators can and do modify sensitivity, and during politically sensitive periods, the system has often behaved more aggressively.
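
For readers less familiar with the terms, the trade-off can be made concrete with the standard definitions. The counts below are invented purely to show how the two metrics move in opposite directions. They are not measurements of the GFW.

```python
from typing import Tuple

def precision_recall(tp: int, fp: int, fn: int) -> Tuple[float, float]:
    """precision = tp / (tp + fp); recall = tp / (tp + fn)."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall

# Hypothetical counts out of 100 truly policy-violating connections.
conservative = precision_recall(tp=80, fp=2, fn=20)   # few legitimate flows hit
aggressive = precision_recall(tp=95, fp=30, fn=5)     # more caught, more collateral
```

In this made-up example the conservative setting has precision of about 0.98 and recall of 0.80, while the aggressive setting has precision of 0.76 and recall of 0.95. Raising sensitivity catches more violating connections at the cost of disrupting more legitimate ones.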

Leakage is another significant challenge. Any “false information” introduced by the GFW – such as poisoned DNS records or null routes – must stay strictly within national borders. Otherwise, it risks spreading through the global routing or DNS ecosystem and causing collateral damage. A well-known example occurred in 2010, when a Chilean DNS operator observed incorrect resolutions for domains like Facebook, YouTube, and Twitter. The issue was traced back to DNS queries inadvertently routed through China; the GFW injected forged responses, which then leaked outside the border due to insufficient direction-checking at the time.
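
One way to picture direction-checking is as an extra condition on the injection decision. The sketch below is speculative: it assumes the injector can compare a query's source address against a list of domestic prefixes, and it uses a documentation range as a stand-in for that list. `DOMESTIC_PREFIXES` and `should_inject` are hypothetical names.

```python
import ipaddress

# Stand-in for "domestic" address space (RFC 5737 documentation range).
DOMESTIC_PREFIXES = [ipaddress.ip_network("198.51.100.0/24")]

def is_domestic(addr: str) -> bool:
    ip = ipaddress.ip_address(addr)
    return any(ip in net for net in DOMESTIC_PREFIXES)

def should_inject(query_src: str, name_is_blocked: bool) -> bool:
    """Inject a forged answer only for blocked names queried from inside."""
    return name_is_blocked and is_domestic(query_src)
```

Without the `is_domestic` condition, a foreign query that merely transits the border would receive a forged answer, which matches the kind of leak described in the 2010 incident.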

Observable Characteristics

Because the GFW’s architecture is not publicly documented, much of what is known comes from researchers and users examining externally visible behavior. Common observations include:

- TCP RST injections: forged packets abruptly terminating connections.

- Silent timeouts: connections stall indefinitely, often a signature of IP- or route-based blocking.

- Forged DNS records: detectable when users manually compare DNS results or modify resolver settings.

- Asymmetric behavior: outbound and inbound traffic may be treated differently, a pattern observed in various academic measurements.

These behaviors collectively hint at a complex, distributed filtering system rather than a single filtering device.
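
A measurement client might bucket the first two symptoms roughly as follows. This is a minimal sketch using Python's standard `socket` module. The outcome labels are my own, and none of them proves filtering by itself, since resets and timeouts also occur for ordinary reasons.

```python
import socket

def classify_failure(exc: BaseException) -> str:
    """Map a socket exception to a coarse symptom label."""
    if isinstance(exc, ConnectionResetError):
        return "reset"      # consistent with, but not proof of, RST injection
    if isinstance(exc, (socket.timeout, TimeoutError)):
        return "timeout"    # consistent with IP- or route-based blocking
    return "other"

def probe(host: str, port: int, payload: bytes, timeout: float = 5.0) -> str:
    """Connect, send one request, and report what happened."""
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            sock.sendall(payload)
            data = sock.recv(4096)
            return "responded" if data else "closed"
    except OSError as exc:
        return classify_failure(exc)
```

Repeating the probe from several vantage points, and with and without a suspected keyword in `payload`, is what turns these individual symptoms into evidence.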

Closing Thoughts

The GFW began as a collection of simple, distributed mechanisms built around natural network choke points. Over time, these mechanisms have been refined, scaled, and partially decentralized, forming the basis of one of the most sophisticated national-level filtering architectures in the world. In the next post, we will shift perspective to the client side, examining how early VPN protocols appeared “on the wire” and how their design sparked a long and evolving interplay between censorship systems and circumvention technologies.

References

- The Great Firewall of China. https://www.wired.com/1997/06/china-3/

- Cisco Leak: ‘Great Firewall’ of China Was a Chance to Sell More Routers. https://www.wired.com/2008/05/leaked-cisco-do/

- Liu, G., Yun, X., Fang, B., Hu, M. 一种基于路由扩散的大规模网络控管方法. Journal of China Institute of Communications. https://www.docin.com/p-1321809121.html

- Global Internet Freedom Consortium. China Great Firewall Revealed. http://www.internetfreedom.org/files/WhitePaper/ChinaGreatFirewallRevealed.pdf

- Deconstructing Great Firewall China. https://www.thousandeyes.com/blog/deconstructing-great-firewall-china

- Daniel Anderson. Splinternet Behind the Great Firewall of China. ACM Queue. https://queue.acm.org/detail.cfm?id=2405036

- Anomalous behavior of the DNS on March 24th, 2010. https://www.nic.cl/anuncios/20100329-rootI-eng.html

- Anonymous. Towards a Comprehensive Picture of the Great Firewall’s DNS Censorship. 4th USENIX Workshop on Free and Open Communications on the Internet. https://www.usenix.org/system/files/conference/foci14/foci14-anonymous.pdf

- Richard Clayton, Steven J. Murdoch, and Robert N. M. Watson. Ignoring the Great Firewall of China. https://www.cl.cam.ac.uk/~rnc1/ignoring.pdf

- Jedidiah R. Crandall, Daniel Zinn, Michael Byrd, Earl Barr, Rich East. ConceptDoppler: A weather tracker for internet censorship. Proceedings of the 2007 ACM Conference on Computer and Communications Security. https://www.cs.unm.edu/~crandall/concept_doppler_ccs07.pdf

- Sparks, Neo, Tank, Smith, Dozer. The Collateral Damage of Internet Censorship by DNS Injection. ACM SIGCOMM Computer Communication Review. https://conferences.sigcomm.org/sigcomm/2012/paper/ccr-paper266.pdf

- Xueyang Xu, Z. Morley Mao, and J. Alex Halderman. Internet censorship in china: where does the filtering occur? Proceedings of the 12th international conference on Passive and active measurement (PAM’11). https://dl.acm.org/doi/10.5555/1987510.1987524

- 深入理解GFW: 内部结构. http://gfwrev.blogspot.com/2010/02/gfw.html

- 深入理解GFW: DNS污染. http://gfwrev.blogspot.com/2009/11/gfwdns.html
